From c4a1d67dba8fe5517d161fdfe20b67c78ded5911 Mon Sep 17 00:00:00 2001
From: Doanh B C <56221762+caodoanh2001@users.noreply.github.com>
Date: Wed, 19 Jun 2024 00:44:03 +0900
Subject: [PATCH] Update and rename announcement_miccai2024 to
 announcement_miccai2024.md

---
 _news/announcement_miccai2024    | 24 ------------------------
 _news/announcement_miccai2024.md | 19 +++++++++++++++++++
 2 files changed, 19 insertions(+), 24 deletions(-)
 delete mode 100644 _news/announcement_miccai2024
 create mode 100644 _news/announcement_miccai2024.md

diff --git a/_news/announcement_miccai2024 b/_news/announcement_miccai2024
deleted file mode 100644
index ee77106..0000000
--- a/_news/announcement_miccai2024
+++ /dev/null
@@ -1,24 +0,0 @@
----
-layout: post
-title: One paper has been accepted at MICCAI2024
-date: 2024-06-17 00:00:00
-description: One paper has been accepted at MICCAI2024
-tags: formatting links
-categories: sample-posts
-inline: false
----
-
-FALFormer: Feature-aware Landmarks self-attention for Whole-slide Image Classification
-
-
-Authors: Doanh C. Bui, Trinh T. L. Vuong, Jin Tae Kwak
-
-
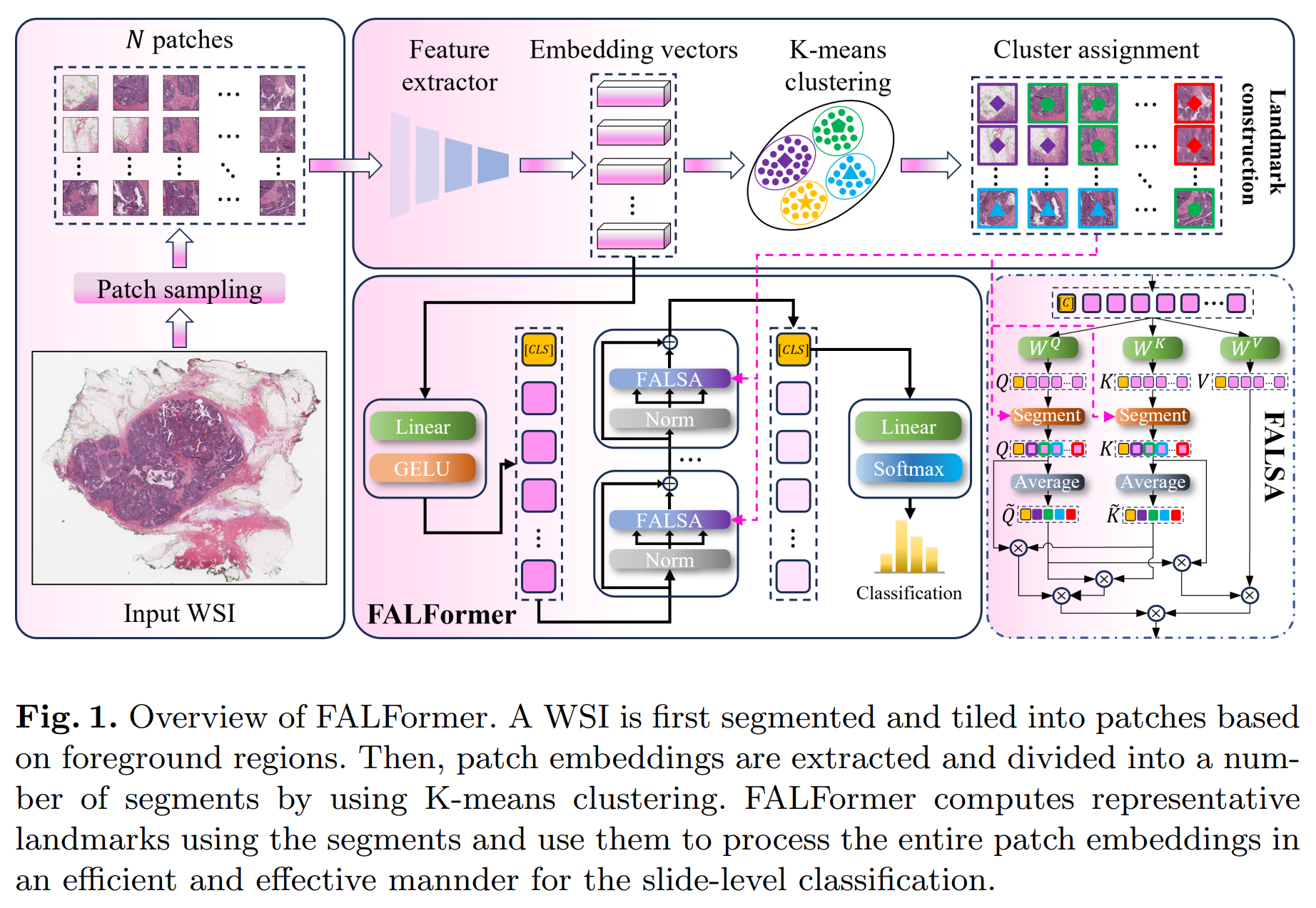

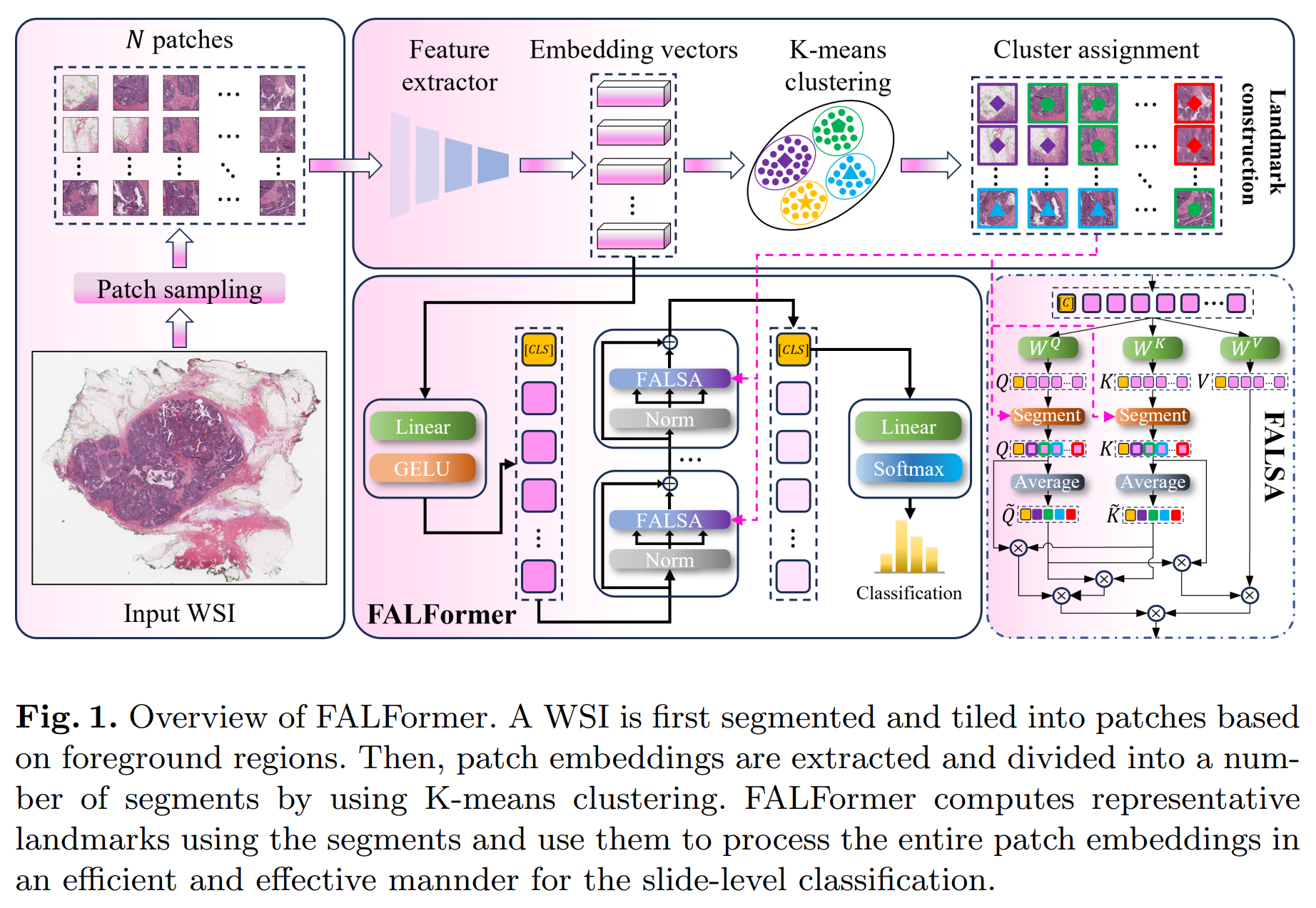

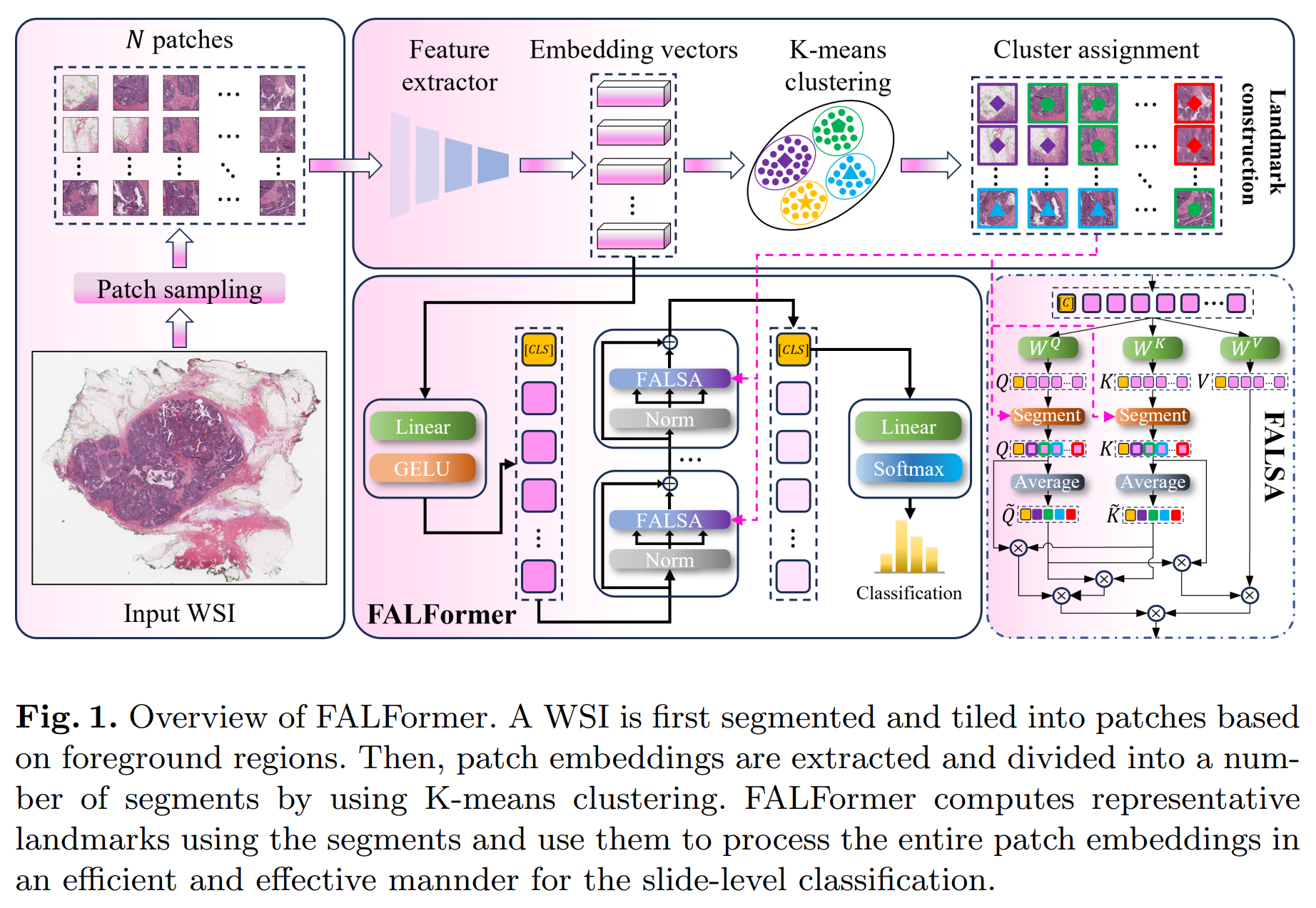

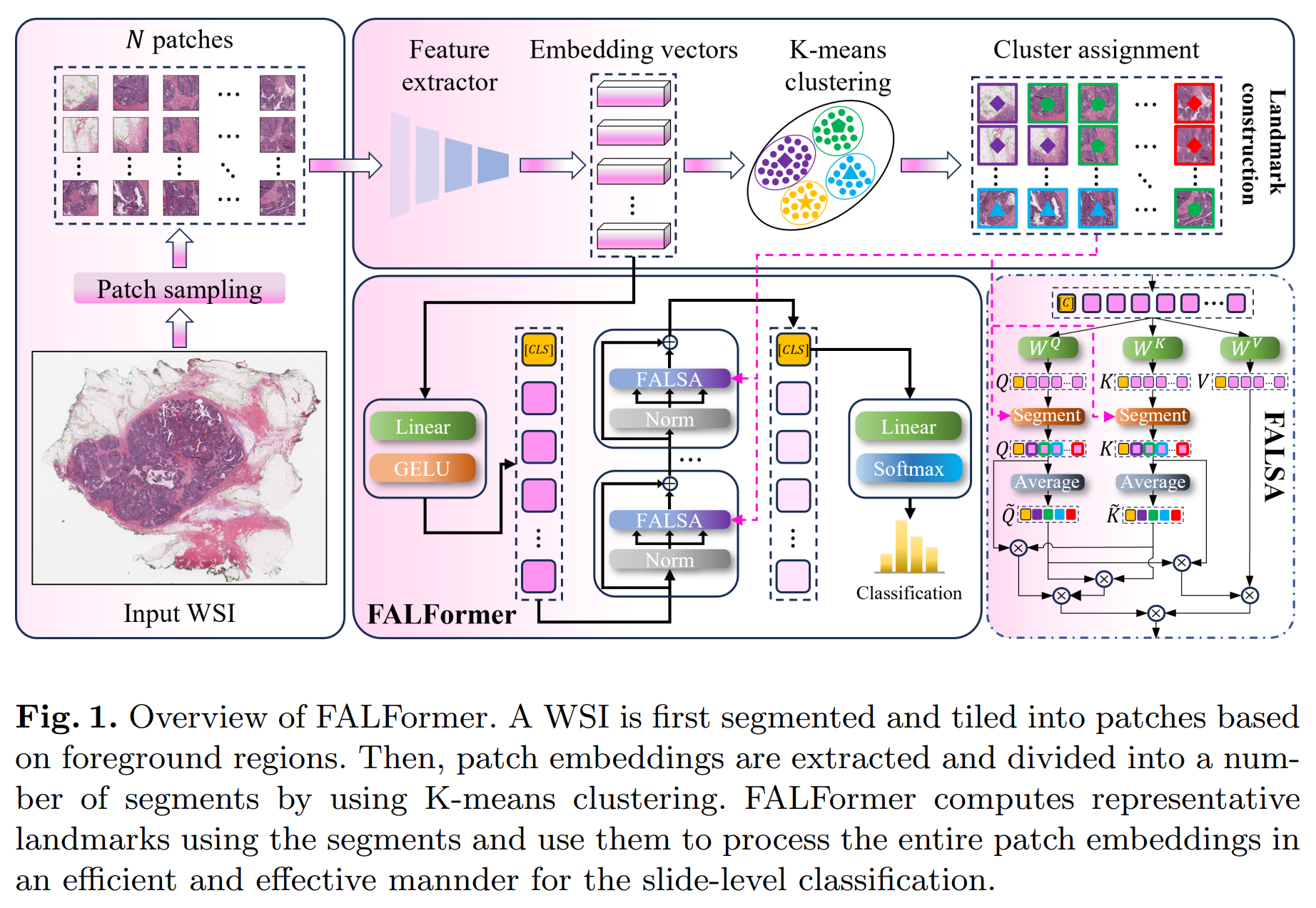

-Abstract: Slide-level classification for whole-slide images (WSIs) has been widely recognized as a crucial problem in digital and computational pathology. Current approaches commonly consider WSIs as a bag of cropped patches and process them via multiple instance learning due to the large number of patches, which cannot fully explore the relationship among patches; in other words, the global information cannot be fully incorporated into decision making. Herein, we propose an efficient and effective slide-level classification model, named as FALFormer, that can process a WSI as a whole so as to fully exploit the relationship among the entire patches and to improve the classification performance. FALFormer is built based upon Transformers and self-attention mechanism. To lessen the computational burden of the original self-attention mechanism and to process the entire patches together in a WSI, FALFormer employs Nystrom self-attention which approximates the computation by using a smaller number of tokens or landmarks. For effective learning, FALFormer introduces feature-aware landmarks to enhance the representation power of the landmarks and the quality of the approximation. We systematically evaluate the performance of FALFormer using two public datasets, including CAMELYON16 and TCGA-BRCA. The experimental results demonstrate that FALFormer achieves superior performance on both datasets, outperforming the state-of-the-art methods for the slide-level classification. This suggests that FALFormer can facilitate an accurate and precise analysis of WSIs, potentially leading to improved diagnosis and prognosis on WSIs.
-
-
- -
-
-This work was conducted under the supervision of Prof. Jin Tae Kwak during my master's degree.
-Paper and code will be released soon!
diff --git a/_news/announcement_miccai2024.md b/_news/announcement_miccai2024.md
new file mode 100644
index 0000000..8476e5b
--- /dev/null
+++ b/_news/announcement_miccai2024.md
@@ -0,0 +1,19 @@
+---
+layout: post
+title: One paper has been accepted at MICCAI2024
+date: 2024-06-17 00:00:00
+description: One paper has been accepted at MICCAI2024
+tags: formatting links
+categories: sample-posts
+inline: false
+---
+
+## FALFormer: Feature-aware Landmarks self-attention for Whole-slide Image Classification
+*Doanh C. Bui, Trinh T. L. Vuong, Jin Tae Kwak*
+
+**Abstract:** Slide-level classification for whole-slide images (WSIs) has been widely recognized as a crucial problem in digital and computational pathology. Current approaches commonly consider WSIs as a bag of cropped patches and process them via multiple instance learning due to the large number of patches, which cannot fully explore the relationship among patches; in other words, the global information cannot be fully incorporated into decision making. Herein, we propose an efficient and effective slide-level classification model, named as FALFormer, that can process a WSI as a whole so as to fully exploit the relationship among the entire patches and to improve the classification performance. FALFormer is built based upon Transformers and self-attention mechanism. To lessen the computational burden of the original self-attention mechanism and to process the entire patches together in a WSI, FALFormer employs Nystrom self-attention which approximates the computation by using a smaller number of tokens or landmarks. For effective learning, FALFormer introduces feature-aware landmarks to enhance the representation power of the landmarks and the quality of the approximation. We systematically evaluate the performance of FALFormer using two public datasets, including CAMELYON16 and TCGA-BRCA. The experimental results demonstrate that FALFormer achieves superior performance on both datasets, outperforming the state-of-the-art methods for the slide-level classification. This suggests that FALFormer can facilitate an accurate and precise analysis of WSIs, potentially leading to improved diagnosis and prognosis on WSIs.
+
+
+ +
+This work was conducted under the supervision of Prof. Jin Tae Kwak during my master's degree.
+Paper and code will be released soon!