
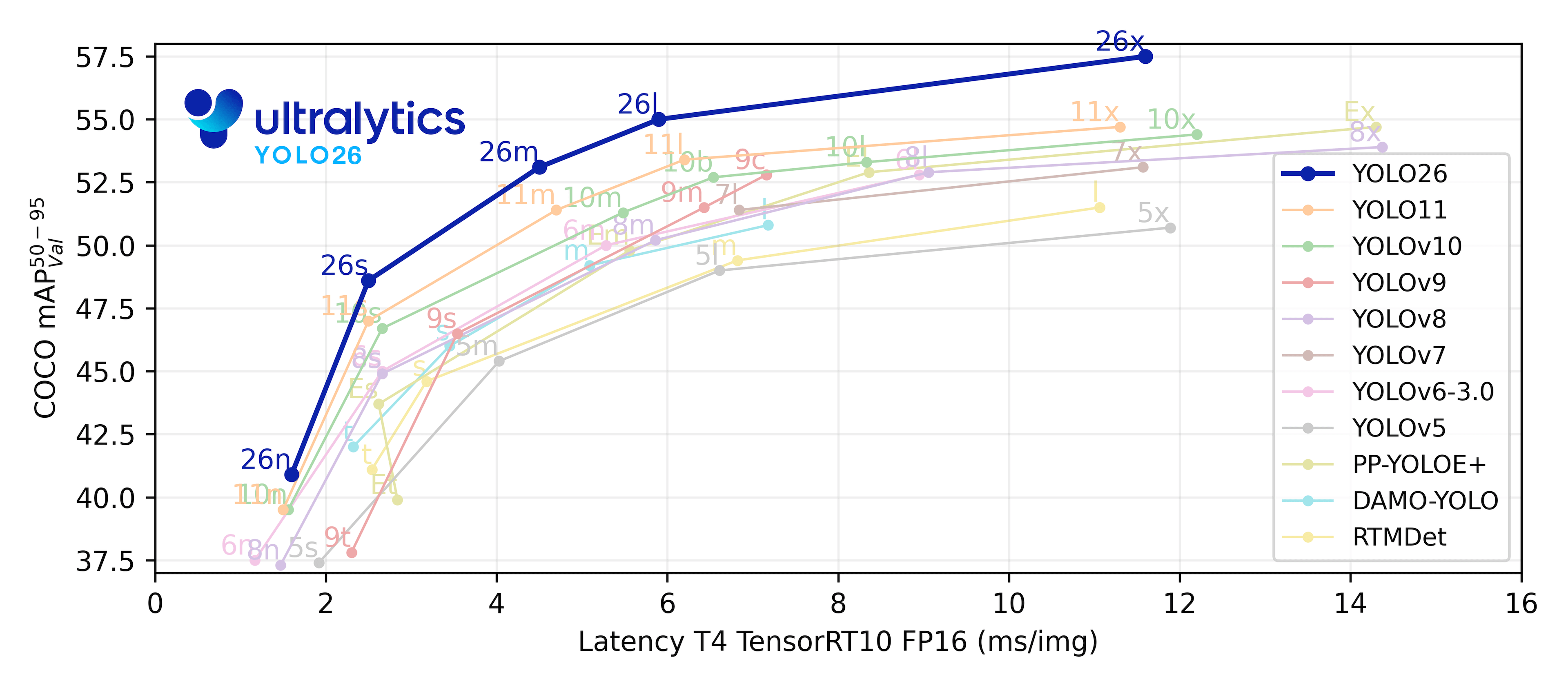

Ultralytics YOLOv8 is a cutting-edge, state-of-the-art (SOTA) model that builds upon the success of previous YOLO versions and introduces new features and improvements to further boost performance and flexibility. YOLOv8 is designed to be fast, accurate, and easy to use, making it an excellent choice for a wide range of object detection and tracking, instance segmentation, image classification and pose estimation tasks.

We hope that the resources here will help you get the most out of YOLOv8. Please browse the YOLOv8 Docs for details, raise an issue on GitHub for support, and join our Discord community for questions and discussions!

To request an Enterprise License please complete the form at Ultralytics Licensing.

See below for a quickstart installation and usage example, and see the YOLOv8 Docs for full documentation on training, validation, prediction and deployment of the original version. This fork contains only a few additions, which are detailed in the section Forked code additions.

Install

Pip install the ultralytics package including all requirements in a Python>=3.8 environment with PyTorch>=1.8.

    pip install ultralytics

For alternative installation methods including Conda, Docker, and Git, please refer to the Quickstart Guide.

Usage

YOLOv8 may be used directly in the Command Line Interface (CLI) with a yolo command:

    yolo predict model=yolov8n.pt source='https://ultralytics.com/images/bus.jpg'

yolo can be used for a variety of tasks and modes and accepts additional arguments, e.g. imgsz=640. See the YOLOv8 CLI Docs for examples.

YOLOv8 may also be used directly in a Python environment, and accepts the same arguments as in the CLI example above:

    from ultralytics import YOLO

    # Load a model
    model = YOLO("yolov8n.yaml")  # build a new model from scratch
    model = YOLO("yolov8n.pt")  # load a pretrained model (recommended for training)

    # Use the model
    model.train(data="coco128.yaml", epochs=3)  # train the model
    metrics = model.val()  # evaluate model performance on the validation set
    results = model("https://ultralytics.com/images/bus.jpg")  # predict on an image
    path = model.export(format="onnx")  # export the model to ONNX format

See YOLOv8 Python Docs for more examples.

This section describes the changes that this fork adds to the main code.

There are three main additions:

- Custom augmentations: This permits the addition of new augmentations not present in the ultralytics pipeline.

- Excluding bounding boxes: This lets the training process remove objects of specific classes from the images.

- Biasing data using labels: This allows the user to select which classes appear more frequently during training.

These three additions can be used in the code as follows:

    from ultralytics.ultralytics import YOLO

    model = YOLO("yolov8n.yaml")

    # if not self.standard_params.resume:
    #     self.model.args = hyper_params
    # self.model.overrides = args.to_dict()

    # The code seems to reset all the parameters of the model when training for some reason,
    # so we need to pass them again to override them.
    # dataset, generator_class_rate, ambiguous_classes, mapping_classes and
    # custom_augmentation are defined by the user.
    input_data = dict(getattr(model, 'args', model.model.args))
    input_data['data'] = dataset

    # New options for this forked code
    input_data['cfg']['shuffle_class'] = True
    input_data['cfg']['class_rate'] = generator_class_rate
    input_data['cfg']['ambiguous_classes'] = ambiguous_classes
    input_data['cfg']['mapping_classes'] = mapping_classes
    # Force the dataset cache to be removed. Useful if the dataset has been updated,
    # since ultralytics caches the dataset
    input_data['cfg']['delete_cache'] = True
    input_data['methods'] = {'custom_augmentation': custom_augmentation}

    model.train(**input_data)

The custom augmentation is a function that receives the ultralytics label object (output of the model when predicting) and returns an object of the same type. This object contains the image and the boxes, masks, etc. The bounding boxes are in center, width, height format.
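As a rough sketch of the kind of transformation such a function might apply, the snippet below flips an image horizontally and mirrors its boxes. It deliberately uses a simplified stand-in for the label: a plain dict with the image as nested lists under 'img' and the boxes as tuples under a hypothetical 'bboxes' key, with coordinates assumed to be normalized to [0, 1]. The real ultralytics label stores boxes in an object under 'instances', so this is an illustration of the geometry only, not the fork's API.

```python
from typing import Dict, List, Tuple

# (x_center, y_center, width, height), assumed normalized to [0, 1]
Box = Tuple[float, float, float, float]


def hflip_label(label: Dict) -> Dict:
    """Return a horizontally flipped copy of a simplified label dict."""
    image: List[List[int]] = label["img"]
    boxes: List[Box] = label["bboxes"]  # hypothetical key, for illustration only
    # Reverse every row of the image to mirror it left to right.
    flipped_image = [row[::-1] for row in image]
    # Mirroring only moves the box centre along x; the size is unchanged.
    flipped_boxes = [(1.0 - xc, yc, w, h) for xc, yc, w, h in boxes]
    return {**label, "img": flipped_image, "bboxes": flipped_boxes}


label = {"img": [[0, 1, 2], [3, 4, 5]], "bboxes": [(0.25, 0.5, 0.2, 0.4)]}
flipped = hflip_label(label)
print(flipped["img"])     # [[2, 1, 0], [5, 4, 3]]
print(flipped["bboxes"])  # [(0.75, 0.5, 0.2, 0.4)]
```

The function returns a new dict rather than editing the input, which keeps the original label available if the augmentation is applied only with some probability.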

An illustration of an augmentation function is presented below:

    def custom_augmentation(label):
        bbox = label['instances'].bboxes
        image = label['img']
        ...
        # The output has the same structure as the input: the image, bboxes, masks, etc.
        # must be modified and stored in it
        return modified_label

The removal of bounding boxes from images is controlled by the parameters ambiguous_classes and mapping_classes. The purpose of this addition is the following. Imagine that we have a small dataset of people. In this dataset each individual person is labelled, but there are also a few labelled hands, heads and other parts of the human body. To get an initial model it would be convenient not to use these objects, but we do want to keep using those images because complete persons may appear in them as well.

To solve this issue, this code allows the user to select a set of classes that are going to be removed, meaning the objects in the images will be replaced with random noise.

The bounding boxes in the training and validation labels contain the classes that are represented. These classes are integers from 0 to nc_complete - 1, in contrast to 0 to nc - 1 as in the original code. Here nc is the number of classes to be detected, whereas nc_complete is the total number of classes in the dataset. In addition, there is a variable named excluded_classes (the ambiguous_classes parameter) containing the classes that must be removed from the images, and a mapper (mapping_classes) that changes the class indices of the classes to be detected so that they are contiguous.
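One way to derive such a mapper, sketched here under the assumption that kept classes simply keep their relative order, is to enumerate the class indices that are not excluded. The helper name build_class_mapping is hypothetical and not part of the fork; the numbers are the example values used in this section (13 classes in total, 5 of them excluded).

```python
from typing import Dict, Iterable


def build_class_mapping(nc_complete: int, excluded: Iterable[int]) -> Dict[int, int]:
    """Map each kept dataset class index to a contiguous index starting at 0."""
    excluded_set = set(excluded)
    kept = [c for c in range(nc_complete) if c not in excluded_set]
    return {old: new for new, old in enumerate(kept)}


ambiguous_classes = [2, 3, 8, 9, 11]
mapping_classes = build_class_mapping(13, ambiguous_classes)
print(mapping_classes)       # {0: 0, 1: 1, 4: 2, 5: 3, 6: 4, 7: 5, 10: 6, 12: 7}
print(len(mapping_classes))  # 8, the number of classes left to detect (nc)
```

The length of the resulting mapping is a quick consistency check: it should equal nc, and nc plus the number of excluded classes should equal nc_complete (8 + 5 = 13 here).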

An example of these variables is shown below:

    ambiguous_classes = [2, 3, 8, 9, 11]
    mapping_classes = {0: 0, 1: 1, 4: 2, 5: 3, 6: 4, 7: 5, 10: 6, 12: 7}

The nc_complete value must be set in the yaml file describing the dataset. An example of this yaml file is:

    names:
    - barrier
    - barrier_angle
    - barrier_connector
    - barrier_pad
    - manhole
    - plastic_barrier
    - plastic_barrier_angle
    - water_barrier
    nc: 8
    nc_complete: 13
    train: PATH/TO/training.txt
    val: PATH/TO/validation.txt
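To make the exclusion step more concrete, here is a minimal sketch of what happens to a single training sample: boxes of excluded classes are overwritten with random noise and dropped from the labels, while the remaining boxes get their class index remapped. It is not the fork's implementation. The function name, the grayscale nested-list image, and the normalized (class, x_center, y_center, width, height) label tuples are all assumptions made for illustration, and the sketch does not handle excluded boxes that overlap kept ones.

```python
import math
import random
from typing import Dict, List, Sequence, Tuple

# (class, x_center, y_center, width, height), coordinates assumed normalized to [0, 1]
Label = Tuple[int, float, float, float, float]


def exclude_and_remap(
    image: List[List[int]],
    labels: Sequence[Label],
    ambiguous_classes: Sequence[int],
    mapping_classes: Dict[int, int],
    seed: int = 0,
) -> Tuple[List[List[int]], List[Label]]:
    """Fill boxes of excluded classes with noise and remap the remaining class indices."""
    rng = random.Random(seed)
    height, width = len(image), len(image[0])
    noisy = [row[:] for row in image]  # copy so the input image is left untouched
    excluded = set(ambiguous_classes)
    kept: List[Label] = []
    for cls, xc, yc, w, h in labels:
        if cls not in excluded:
            kept.append((mapping_classes[cls], xc, yc, w, h))
            continue
        # Convert the normalized centre/size box to pixel bounds, clipped to the image.
        x0 = max(0, math.floor((xc - w / 2) * width))
        x1 = min(width, math.ceil((xc + w / 2) * width))
        y0 = max(0, math.floor((yc - h / 2) * height))
        y1 = min(height, math.ceil((yc + h / 2) * height))
        for y in range(y0, y1):
            for x in range(x0, x1):
                noisy[y][x] = rng.randrange(256)
    return noisy, kept


image = [[-1] * 4 for _ in range(4)]  # -1 marks untouched pixels in this toy example
labels = [(2, 0.25, 0.25, 0.5, 0.5), (4, 0.75, 0.75, 0.5, 0.5)]
ambiguous_classes = [2, 3, 8, 9, 11]
mapping_classes = {0: 0, 1: 1, 4: 2, 5: 3, 6: 4, 7: 5, 10: 6, 12: 7}
noisy_image, kept_labels = exclude_and_remap(image, labels, ambiguous_classes, mapping_classes)
print(kept_labels)  # [(2, 0.75, 0.75, 0.5, 0.5)]
```

In the toy example the class 2 box (top-left quarter of the image) is replaced by noise and removed from the labels, and the class 4 box survives with its index remapped to 2.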

Lastly, shuffle_class and generator_class_rate are used to bias the dataset when needed. They allow the user to set the relative rate of each class. For instance, suppose we have 3 classes (barrier, manhole and water_barrier) and we want the class manhole to be used twice as often as the other two, since it seems to be harder for the model to learn. Then:

    generator_class_rate = {'barrier': 1, 'manhole': 2, 'water_barrier': 1}

Note that shuffle_class controls whether data is randomly selected by class or the whole dataset is used as is. If shuffle_class is False (the default behaviour, and the only one in the original code), the whole dataset is used: for instance, if there are 100 images (80 of class barrier and 20 of the rest), all 100 images are used in each epoch. When shuffle_class is True, the training selects each class with equal probability regardless of the number of images of each class, so 'barrier', 'manhole' and 'water_barrier' are selected with the same probability even though 'barrier' is more common. If generator_class_rate is provided, the classes are selected following that rate instead of with equal probability.
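The selection described above can be read as a two-stage draw: pick a class with probability proportional to its rate, then pick an image of that class. With rates 1, 2 and 1, manhole is chosen with probability 2 / (1 + 2 + 1) = 0.5 and each of the other two classes with 0.25. The sketch below illustrates that reading on a toy dataset; the function name sample_by_class and the file names are hypothetical, and the fork's actual sampler may differ (for example in how images containing several classes are handled).

```python
import random
from collections import Counter
from typing import Dict, List, Optional, Tuple


def sample_by_class(
    images_by_class: Dict[str, List[str]],
    n_samples: int,
    class_rate: Optional[Dict[str, float]] = None,
    seed: int = 0,
) -> List[Tuple[str, str]]:
    """Draw (class, image) pairs, choosing the class first and then one of its images."""
    rng = random.Random(seed)
    classes = sorted(images_by_class)
    # Without explicit rates every class gets weight 1, i.e. equal probability.
    weights = [1.0 if class_rate is None else class_rate[c] for c in classes]
    samples = []
    for _ in range(n_samples):
        cls = rng.choices(classes, weights=weights, k=1)[0]
        samples.append((cls, rng.choice(images_by_class[cls])))
    return samples


# Imbalanced toy dataset: 80 barrier images, 10 of each other class.
images_by_class = {
    "barrier": [f"barrier_{i}.jpg" for i in range(80)],
    "manhole": [f"manhole_{i}.jpg" for i in range(10)],
    "water_barrier": [f"water_barrier_{i}.jpg" for i in range(10)],
}
generator_class_rate = {"barrier": 1, "manhole": 2, "water_barrier": 1}
samples = sample_by_class(images_by_class, 10_000, generator_class_rate)
counts = Counter(cls for cls, _ in samples)
print({cls: round(n / len(samples), 2) for cls, n in counts.items()})
```

Calling sample_by_class without class_rate corresponds to shuffle_class being True with no rates given: each class is then drawn about a third of the time, even though barrier has eight times as many images.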

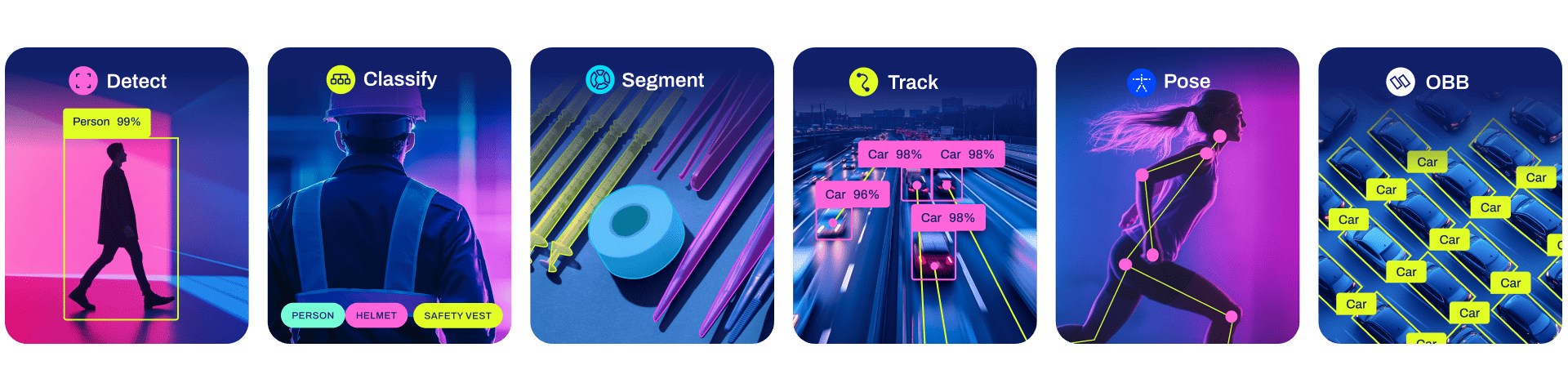

YOLOv8 Detect, Segment and Pose models pretrained on the COCO dataset are available here, as well as YOLOv8 Classify models pretrained on the ImageNet dataset. Track mode is available for all Detect, Segment and Pose models.

All Models download automatically from the latest Ultralytics release on first use.

Detection (COCO)

See Detection Docs for usage examples with these models trained on COCO, which include 80 pre-trained classes.

| Model | size (pixels) | mAPval 50-95 | Speed CPU ONNX (ms) | Speed A100 TensorRT (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|
| YOLOv8n | 640 | 37.3 | 80.4 | 0.99 | 3.2 | 8.7 |
| YOLOv8s | 640 | 44.9 | 128.4 | 1.20 | 11.2 | 28.6 |
| YOLOv8m | 640 | 50.2 | 234.7 | 1.83 | 25.9 | 78.9 |
| YOLOv8l | 640 | 52.9 | 375.2 | 2.39 | 43.7 | 165.2 |
| YOLOv8x | 640 | 53.9 | 479.1 | 3.53 | 68.2 | 257.8 |

- mAPval values are for single-model single-scale on COCO val2017 dataset. Reproduce by `yolo val detect data=coco.yaml device=0`

- Speed averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce by `yolo val detect data=coco.yaml batch=1 device=0|cpu`

Detection (Open Images V7)

See Detection Docs for usage examples with these models trained on Open Images V7, which include 600 pre-trained classes.

| Model | size (pixels) | mAPval 50-95 | Speed CPU ONNX (ms) | Speed A100 TensorRT (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|
| YOLOv8n | 640 | 18.4 | 142.4 | 1.21 | 3.5 | 10.5 |
| YOLOv8s | 640 | 27.7 | 183.1 | 1.40 | 11.4 | 29.7 |
| YOLOv8m | 640 | 33.6 | 408.5 | 2.26 | 26.2 | 80.6 |
| YOLOv8l | 640 | 34.9 | 596.9 | 2.43 | 44.1 | 167.4 |
| YOLOv8x | 640 | 36.3 | 860.6 | 3.56 | 68.7 | 260.6 |

- mAPval values are for single-model single-scale on Open Images V7 dataset. Reproduce by `yolo val detect data=open-images-v7.yaml device=0`

- Speed averaged over Open Images V7 val images using an Amazon EC2 P4d instance. Reproduce by `yolo val detect data=open-images-v7.yaml batch=1 device=0|cpu`

Segmentation (COCO)

See Segmentation Docs for usage examples with these models trained on COCO-Seg, which include 80 pre-trained classes.

| Model | size (pixels) | mAPbox 50-95 | mAPmask 50-95 | Speed CPU ONNX (ms) | Speed A100 TensorRT (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLOv8n-seg | 640 | 36.7 | 30.5 | 96.1 | 1.21 | 3.4 | 12.6 |
| YOLOv8s-seg | 640 | 44.6 | 36.8 | 155.7 | 1.47 | 11.8 | 42.6 |
| YOLOv8m-seg | 640 | 49.9 | 40.8 | 317.0 | 2.18 | 27.3 | 110.2 |
| YOLOv8l-seg | 640 | 52.3 | 42.6 | 572.4 | 2.79 | 46.0 | 220.5 |
| YOLOv8x-seg | 640 | 53.4 | 43.4 | 712.1 | 4.02 | 71.8 | 344.1 |

- mAPval values are for single-model single-scale on COCO val2017 dataset. Reproduce by `yolo val segment data=coco-seg.yaml device=0`

- Speed averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce by `yolo val segment data=coco-seg.yaml batch=1 device=0|cpu`

Pose (COCO)

See Pose Docs for usage examples with these models trained on COCO-Pose, which include 1 pre-trained class, person.

| Model | size (pixels) | mAPpose 50-95 | mAPpose 50 | Speed CPU ONNX (ms) | Speed A100 TensorRT (ms) | params (M) | FLOPs (B) |
|---|---|---|---|---|---|---|---|
| YOLOv8n-pose | 640 | 50.4 | 80.1 | 131.8 | 1.18 | 3.3 | 9.2 |
| YOLOv8s-pose | 640 | 60.0 | 86.2 | 233.2 | 1.42 | 11.6 | 30.2 |
| YOLOv8m-pose | 640 | 65.0 | 88.8 | 456.3 | 2.00 | 26.4 | 81.0 |
| YOLOv8l-pose | 640 | 67.6 | 90.0 | 784.5 | 2.59 | 44.4 | 168.6 |
| YOLOv8x-pose | 640 | 69.2 | 90.2 | 1607.1 | 3.73 | 69.4 | 263.2 |
| YOLOv8x-pose-p6 | 1280 | 71.6 | 91.2 | 4088.7 | 10.04 | 99.1 | 1066.4 |

- mAPval values are for single-model single-scale on COCO Keypoints val2017 dataset. Reproduce by `yolo val pose data=coco-pose.yaml device=0`

- Speed averaged over COCO val images using an Amazon EC2 P4d instance. Reproduce by `yolo val pose data=coco-pose.yaml batch=1 device=0|cpu`

Classification (ImageNet)

See Classification Docs for usage examples with these models trained on ImageNet, which include 1000 pretrained classes.

| Model | size (pixels) | acc top1 | acc top5 | Speed CPU ONNX (ms) | Speed A100 TensorRT (ms) | params (M) | FLOPs (B) at 640 |
|---|---|---|---|---|---|---|---|
| YOLOv8n-cls | 224 | 66.6 | 87.0 | 12.9 | 0.31 | 2.7 | 4.3 |
| YOLOv8s-cls | 224 | 72.3 | 91.1 | 23.4 | 0.35 | 6.4 | 13.5 |
| YOLOv8m-cls | 224 | 76.4 | 93.2 | 85.4 | 0.62 | 17.0 | 42.7 |
| YOLOv8l-cls | 224 | 78.0 | 94.1 | 163.0 | 0.87 | 37.5 | 99.7 |
| YOLOv8x-cls | 224 | 78.4 | 94.3 | 232.0 | 1.01 | 57.4 | 154.8 |

- acc values are model accuracies on the ImageNet dataset validation set. Reproduce by `yolo val classify data=path/to/ImageNet device=0`

- Speed averaged over ImageNet val images using an Amazon EC2 P4d instance. Reproduce by `yolo val classify data=path/to/ImageNet batch=1 device=0|cpu`

Our key integrations with leading AI platforms extend the functionality of Ultralytics' offerings, enhancing tasks like dataset labeling, training, visualization, and model management. Discover how Ultralytics, in collaboration with Roboflow, ClearML, Comet, Neural Magic and OpenVINO, can optimize your AI workflow.

| Roboflow | ClearML ⭐ NEW | Comet ⭐ NEW | Neural Magic ⭐ NEW |
|---|---|---|---|
| Label and export your custom datasets directly to YOLOv8 for training with Roboflow | Automatically track, visualize and even remotely train YOLOv8 using ClearML (open-source!) | Free forever, Comet lets you save YOLOv8 models, resume training, and interactively visualize and debug predictions | Run YOLOv8 inference up to 6x faster with Neural Magic DeepSparse |

Experience seamless AI with Ultralytics HUB ⭐, the all-in-one solution for data visualization, YOLOv5 and YOLOv8 🚀 model training and deployment, without any coding. Transform images into actionable insights and bring your AI visions to life with ease using our cutting-edge platform and user-friendly Ultralytics App. Start your journey for Free now!

We love your input! YOLOv5 and YOLOv8 would not be possible without help from our community. Please see our Contributing Guide to get started, and fill out our Survey to send us feedback on your experience. Thank you 🙏 to all our contributors!

Ultralytics offers two licensing options to accommodate diverse use cases:

- AGPL-3.0 License: This OSI-approved open-source license is ideal for students and enthusiasts, promoting open collaboration and knowledge sharing. See the LICENSE file for more details.

- Enterprise License: Designed for commercial use, this license permits seamless integration of Ultralytics software and AI models into commercial goods and services, bypassing the open-source requirements of AGPL-3.0. If your scenario involves embedding our solutions into a commercial offering, reach out through Ultralytics Licensing.

For Ultralytics bug reports and feature requests please visit GitHub Issues, and join our Discord community for questions and discussions!